At AWS re:Invent 2018 last November, AWS introduced a regional construct called Transit Gateway (TGW). AWS Transit Gateway lets customers easily connect multiple Virtual Private Clouds (VPCs) together. TGW acts as the hub and the VPCs act as spokes in a hub-and-spoke model, where any-to-any communication is made possible by traversing the TGW. TGW can replace the popular AWS Transit VPC design that many customers previously deployed to connect multiple VPCs. In this post, I will discuss TGW and how it can currently be used with VMware Cloud on AWS. At the end of this post, there’s also a video of a demo using the same setup described here; feel free to jump to the video if you like.

VMware Cloud on AWS SDDC leverages familiar VMware technologies such as vSphere ESXi, vSAN, and NSX to provide a robust SDDC in the cloud. This SDDC, powered by the VMware stack, can also easily be connected to on-prem data centers, enabling easy migration and even vMotion from on-prem to cloud and vice versa. To learn more about networking and security provided by NSX in VMware Cloud on AWS, read my prior blogs on the VMware Network Virtualization blog or some of my prior posts here on LinkedIn. I also have some blog posts on my personal website, which I really need to update as I’ve fallen behind due to lack of time. You’ll be seeing some good updates soon!

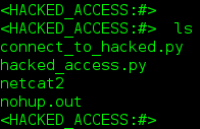

Today, customers can use TGW VPN attachments to connect to VMware Cloud on AWS SDDC. This VPN connectivity is established using route-based IPsec VPN. In the diagram below, you can see I have a TGW deployed and connected to both VMware Cloud on AWS SDDCs and native AWS VPCs.

My connections from TGW to the VMware Cloud on AWS SDDCs use VPN attachments, which rely on the route-based IPsec VPN capability provided by NSX. My connections from TGW to native AWS VPCs use VPC attachments and thus leverage the native underlying ENI connectivity to connect directly from VPC to TGW.

Right away, you can see there are two very clear advantages with TGW:

1.) Ease of creating Any-to-Any communications between native AWS VPCs and VMware Cloud on AWS SDDCs

2.) Connectivity to on-prem can be shared easily with all connecting VMware Cloud on AWS SDDCs and native AWS VPCs

By default, a TGW has one route table. However, you can also use multiple route tables to do some traffic engineering by controlling which routes appear in each table. TGW attachments can be associated with different route tables, and traffic can then be controlled via either static routes or propagation. This is some pretty cool advanced functionality which I will cover in more detail in a later post.
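To make the association and propagation idea concrete, here is a minimal toy model in Python. It is not an AWS API; the class, the attachment names, and the table names are all hypothetical, and only the CIDRs come from the lab described in this post. It is a sketch of the concept, assuming an attachment is associated with one table while its routes may be propagated into others.

```python
from dataclasses import dataclass, field


@dataclass
class TgwRouteTable:
    """Toy model of a single TGW route table (not an AWS API object)."""
    name: str
    associations: set = field(default_factory=set)  # attachments whose traffic uses this table
    routes: dict = field(default_factory=dict)      # destination CIDR -> target attachment

    def associate(self, attachment: str) -> None:
        self.associations.add(attachment)

    def propagate(self, attachment: str, cidrs: list) -> None:
        # Propagation installs the attachment's CIDRs as routes pointing back at it.
        for cidr in cidrs:
            self.routes[cidr] = attachment

    def add_static_route(self, cidr: str, attachment: str) -> None:
        self.routes[cidr] = attachment


# Hypothetical attachment and table names; the CIDRs are the ones used in this post.
shared = TgwRouteTable("shared-services")
shared.associate("vpn-sddc-1")
shared.propagate("vpc-1", ["172.32.0.0/16"])

isolated = TgwRouteTable("isolated")
isolated.associate("vpc-2")
isolated.add_static_route("10.72.31.16/28", "vpn-sddc-1")

print(shared.routes)
print(isolated.routes)
```

In this sketch, traffic arriving from `vpn-sddc-1` is looked up in the `shared-services` table and can only reach what has been propagated or statically added there, which is the lever that makes traffic engineering possible.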

In the first diagram above, you can see I also have a VPN connection from TGW to my on-prem data center. At re:Invent 2018, AWS announced that Direct Connect attachment to TGW was not yet supported but would be available sometime in early 2019, so, as of right now, the only option for this design is VPN to on-prem. Because this VPN connection from on-prem connects to a TGW, it can be shared among all the spoke VMware Cloud on AWS SDDCs and native AWS VPCs. This is depicted more clearly in the diagram below.

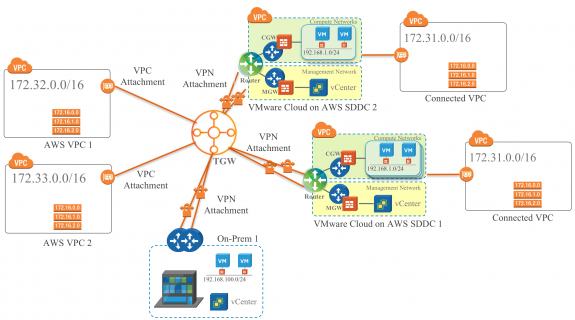

The above design provides shared connectivity to on-prem for all my spokes and also easily provides any-to-any communication.

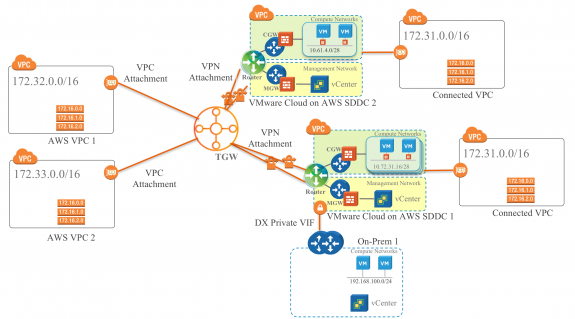

It’s possible some of the spokes may even have Direct Connect connectivity to on-prem, but still be connected to a TGW. One such use case is when workloads in a VMware Cloud on AWS SDDC need to access shared services in multiple other VPCs/SDDCs. This design is shown below and is also what I have set up in my lab. This is the same setup I use in my demo.

The VMware Cloud on AWS SDDCs below are attached to TGW via VPN attachment. There are two IPsec VPN tunnels in Active/Standby mode per VMware Cloud on AWS SDDC.

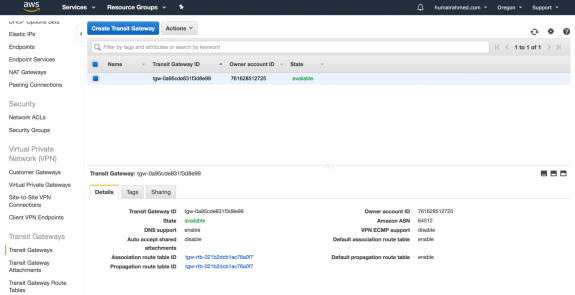
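As a purely conceptual illustration of Active/Standby, the sketch below picks the first tunnel that is up from a priority-ordered list. In the real setup the path selection is driven by BGP over the tunnels, not by code like this; the function and tunnel names are hypothetical.

```python
def select_tunnel(tunnels: list):
    """Return the name of the first tunnel that is up, in priority order, else None."""
    for name, is_up in tunnels:
        if is_up:
            return name
    return None


# Hypothetical tunnel names for one SDDC: the first entry is the preferred (active) tunnel.
print(select_tunnel([("tunnel-1", True), ("tunnel-2", True)]))
print(select_tunnel([("tunnel-1", False), ("tunnel-2", True)]))
```

Only one tunnel carries traffic at a time in this model, which is why ECMP across the two tunnels does not apply to this design.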

Reflecting the above diagram, the screenshot below from the AWS Console shows the AWS Transit Gateway I created. Note that I’ve disabled ECMP, as currently only Active/Standby VPN is supported with VMware Cloud on AWS.

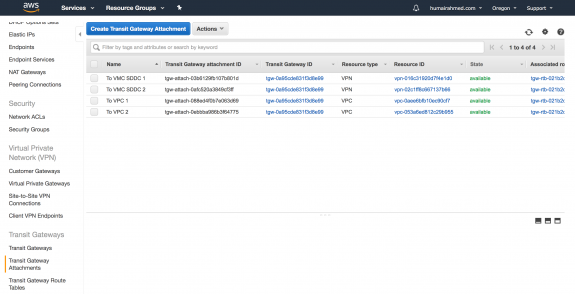
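I created the TGW in the console, but the same settings could also be expressed programmatically. The sketch below only builds the keyword arguments that boto3's EC2 `create_transit_gateway` call accepts; the helper name and the description string are my own assumptions, and nothing is sent to AWS.

```python
def transit_gateway_request(description: str, ecmp: bool = False) -> dict:
    """Build keyword arguments for boto3's EC2 create_transit_gateway call."""
    return {
        "Description": description,
        "Options": {
            # ECMP is disabled here because only Active/Standby VPN is used with the SDDCs.
            "VpnEcmpSupport": "enable" if ecmp else "disable",
            # A single default route table with association and propagation enabled.
            "DefaultRouteTableAssociation": "enable",
            "DefaultRouteTablePropagation": "enable",
        },
    }


params = transit_gateway_request("TGW for VMware Cloud on AWS lab")
print(params)
# With AWS credentials configured, these could be passed on as:
#   import boto3
#   boto3.client("ec2").create_transit_gateway(**params)
```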

Below, you can see I created two VPN attachments, each connected to a different VMware Cloud on AWS SDDC. I also created two VPC attachments, each connected to a different native AWS VPC.

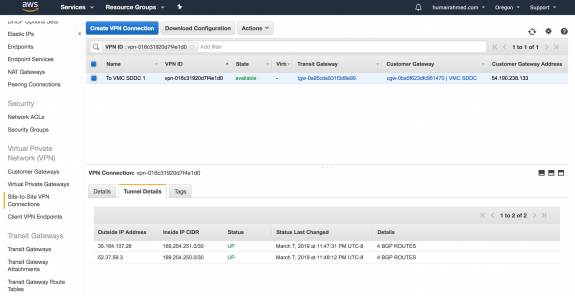

Clicking on the Resource ID of the first row shown above takes me to the VPN connection I’ve established to my first VMware Cloud on AWS SDDC. I created a Customer Gateway in AWS which has the public IP address of my VMware Cloud on AWS SDDC 1 VPN. You can see below under Tunnel Details that AWS provided two public IP addresses, and I selected two different Inside IP CIDR blocks to use for the virtual tunnel interfaces (VTIs) which BGP will peer over; this peering occurs over the IPsec VPN connection. You can see the tunnels are up. The routes are propagated and learned automatically by TGW and by VMware Cloud on AWS SDDC 1 via BGP.

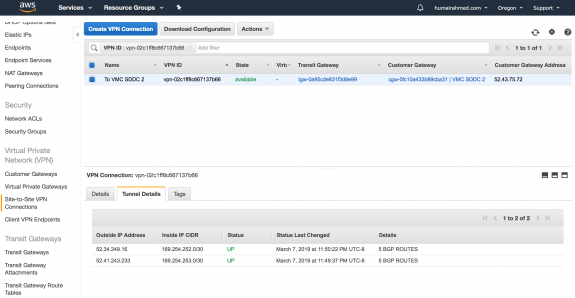
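When picking Inside IP CIDR blocks, a quick sanity check can help. The sketch below assumes each block must be a /30 taken from the 169.254.0.0/16 link-local range and that the two tunnels must not overlap; the CIDR values are hypothetical, not the ones from my lab, and which side takes which address should be confirmed against the VPN configuration AWS generates.

```python
import ipaddress

LINK_LOCAL = ipaddress.ip_network("169.254.0.0/16")


def tunnel_endpoints(inside_cidr: str):
    """Validate a tunnel inside CIDR and return its two usable addresses."""
    net = ipaddress.ip_network(inside_cidr)
    if net.prefixlen != 30 or not net.subnet_of(LINK_LOCAL):
        raise ValueError(f"{inside_cidr} is not a /30 inside {LINK_LOCAL}")
    first, second = net.hosts()  # a /30 has exactly two usable host addresses
    return first, second


# Hypothetical inside CIDRs, one per tunnel.
tunnel_cidrs = ["169.254.10.0/30", "169.254.11.0/30"]
nets = [ipaddress.ip_network(c) for c in tunnel_cidrs]
print("tunnels overlap:", nets[0].overlaps(nets[1]))

for cidr in tunnel_cidrs:
    first, second = tunnel_endpoints(cidr)
    print(f"{cidr}: VTI addresses {first} and {second}")
```

The two addresses in each /30 are the BGP peering endpoints, one on the AWS side and one on the VTI in the SDDC.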

I used the same process to set up connectivity to my VMware Cloud on AWS SDDC 2. Notice that the Customer Gateway address is different because I’m connecting to a different VMware Cloud on AWS SDDC. Also note that I have two different AWS public IPs and selected different Inside IP CIDR blocks.

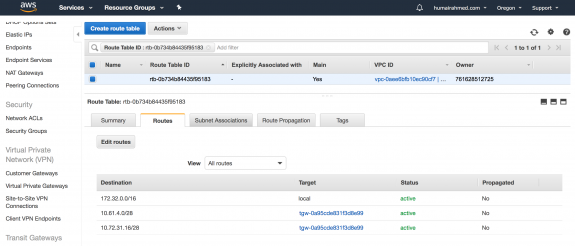

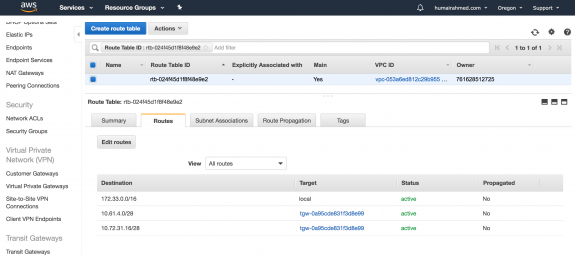

You can see below that the subnet for my native AWS VPC 1 is 172.32.0.0/16. In the route table for this VPC, I manually created two route entries. To reach 10.72.31.16/28, which is the subnet of my App network segment in VMware Cloud on AWS SDDC 1, traffic is sent through the Transit Gateway I created, which you can see is the Target. To reach 10.61.4.0/28, which is the subnet of my Web network segment in VMware Cloud on AWS SDDC 2, traffic is also sent through the Transit Gateway.

With VPC attachments, the routes are not propagated from the TGW to the spoke VPCs. However, the routes from the VPCs are propagated to the TGW as propagation is enabled by default on the TGW.
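The effect of the two manual entries in VPC 1 can be sketched as a longest-prefix-match lookup. The route table below mirrors the entries described above, with `"tgw"` standing in for the Transit Gateway ID and the `local` route for the VPC's own CIDR added as an assumption; the sample destination addresses other than the App VM are hypothetical.

```python
import ipaddress

# Route table for native AWS VPC 1; "tgw" is a placeholder for the Transit Gateway ID.
VPC1_ROUTES = {
    "172.32.0.0/16": "local",   # assumed local route for the VPC's own CIDR
    "10.72.31.16/28": "tgw",    # App segment in VMware Cloud on AWS SDDC 1
    "10.61.4.0/28": "tgw",      # Web segment in VMware Cloud on AWS SDDC 2
}


def next_hop(destination: str, routes: dict):
    """Return the target of the most specific route containing destination, or None."""
    addr = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in routes.items()
        if addr in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None
    # The longest prefix (most specific route) wins.
    return max(matches, key=lambda m: m[0].prefixlen)[1]


for dest in ("10.72.31.17", "10.61.4.5", "172.32.1.10", "192.0.2.1"):
    print(dest, "->", next_hop(dest, VPC1_ROUTES))
```

Any destination without a matching entry has no route in this model, which is why each remote segment needs its own entry pointing at the TGW.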

You can see below, the subnet of VPC 2 is 172.33.0.0/16. I configured the route table in VPC 2 identically.

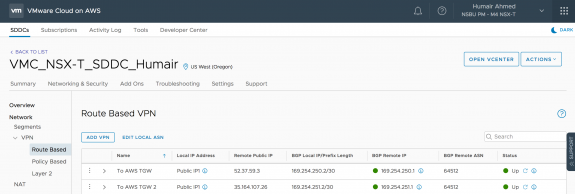

In my VMware Cloud on AWS SDDCs, I can also verify the respective VPN connections are up. Below is the screenshot from my VMware Cloud on AWS SDDC 1.

With some recent enhancements in VMware Cloud on AWS, you can now view Advertised Routes and see Learned Routes.

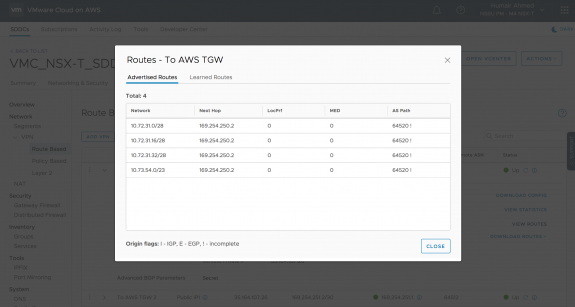

The routes below are being advertised from VMware Cloud on AWS via BGP over VPN to AWS TGW.

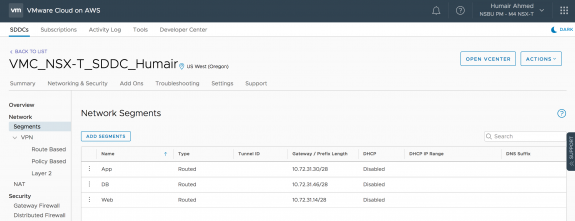

Below are the network segments in VMware Cloud on AWS SDDC 1. From the screenshot above, you can see these networks are being advertised to TGW.

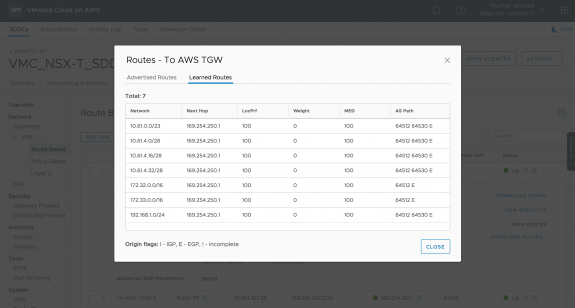

The routes below are being learned by VMware Cloud on AWS via BGP over VPN from AWS TGW. Note that the subnets from VMware Cloud on AWS SDDC 2 (10.61.4.0/28), native AWS VPC 1 (172.32.0.0/16), and native AWS VPC 2 (172.33.0.0/16) are all being learned via BGP over VPN from AWS TGW.

I confirm below from my App VM (10.72.31.17/28) on the App network segment in VMware Cloud on AWS SDDC 1 that I can communicate to workloads in VMware Cloud on AWS SDDC 2 (10.61.4.0/28), native AWS VPC 1 (172.32.0.0/16), and native AWS VPC 2 (172.33.0.0/16).

You can see how easy it is to set up connectivity from VMware Cloud on AWS SDDC to AWS TGW and enable any-to-any communication.

See the full demo here on the NSX YouTube channel or in the embedded video below.

Follow me on Twitter: @Humair_Ahmed
